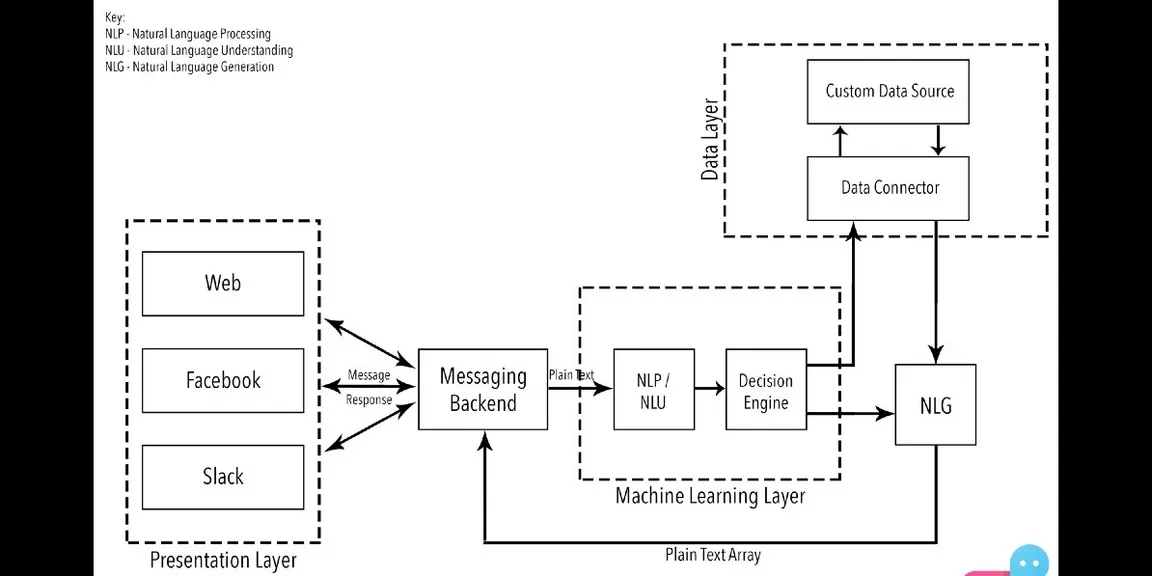

NLP, NLU, NLG and how chatbots work

Various acronyms and terms are thrown around when talking about chatbots. Here we take a look at the basics of this technical revolution.

To understand what the future of chatbots holds, let’s familiarize ourselves with three basic acronyms: NLP (Natural Language Processing), NLU (Natural Language Understanding) and NLG (Natural Language Generation).

I. NLP, or Natural Language Processing, is a blanket term for a machine’s ability to ingest what is said to it, break it down, comprehend its meaning, determine the appropriate action, and respond in language the user will understand.

II. NLU, or Natural Language Understanding, is a subset of NLP that deals with the much narrower, but equally important, problem of handling unstructured inputs and converting them into a structured form that a machine can understand and act upon. While humans effortlessly handle mispronunciations, swapped words, contractions, colloquialisms, and other quirks, machines are less adept at handling unpredictable inputs.

III. NLG, or Natural Language Generation, is, simply put, what happens when computers write language. NLG processes turn structured data into text.
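To make the two directions concrete, here is a minimal sketch in Python. It assumes a toy rule-based approach (keyword and regular-expression matching plus a sentence template), which is far simpler than what a production chatbot would use. The function names `understand` and `generate`, the slot names, and the unit table are all made up for illustration.

```python
import re

# Hypothetical unit table: maps unit spellings to millilitres.
UNIT_TO_ML = {"l": 1000, "litre": 1000, "litres": 1000,
              "liter": 1000, "liters": 1000, "ml": 1}


def understand(text):
    """Toy NLU: turn unstructured text into structured data (intent + slots)."""
    lowered = text.lower()
    result = {"intent": None, "product": None, "quantity_ml": None}
    if re.search(r"\b(buy|want|need|get)\b", lowered):
        result["intent"] = "order"
    if "tropicana" in lowered:
        result["product"] = "Tropicana 100% Orange Juice"
    # A number followed by a volume unit, e.g. "1 litre" or "500ml".
    match = re.search(r"(\d+(?:\.\d+)?)\s*(litres?|liters?|ml|l)\b", lowered)
    if match:
        amount, unit = float(match.group(1)), match.group(2)
        result["quantity_ml"] = int(amount * UNIT_TO_ML[unit])
    return result


def generate(data):
    """Toy NLG: turn structured data back into text using a template."""
    product = data["product"] or "that"
    if data["quantity_ml"] is None:
        return f"How much {product} would you like?"
    litres = data["quantity_ml"] / 1000
    return f"Got it: {litres:g} litre(s) of {product}."


request = understand("I need 1 litre of Tropicana")
print(request["quantity_ml"])   # 1000
print(generate(request))        # Got it: 1 litre(s) of Tropicana 100% Orange Juice.
```

Real systems typically replace the hand-written rules with trained models, but the shape stays the same: text goes in and structured data comes out on the NLU side, and the reverse happens on the NLG side.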

Now imagine for a minute what the process for communication with another human being is like.

Your mother asks you to go buy some Tropicana 100% Orange Juice.

Your first question is how much of it she wants: 1 litre? 500 ml? 200 ml? She tells you she wants a 1 litre Tropicana 100% Orange Juice. Now you know that regular Tropicana is easily available, but 100% is hard to come by, so you call up a few stores beforehand to see where it’s available. You find one store that’s pretty close by, so you go back to your mother and tell her you found what she wanted. It’s $2, maybe $3, and after asking her for the money, you go on your way.

A chatbot follows the same process, with two fundamental differences: the channel of communication and what you’re talking to.

I’ll give you a step-by-step breakdown based on the most fundamental principles of AI and chatbots.

Architecture Diagram for Chatbots ( https://goo.gl/3fRAvH )

1. You find a product on Facebook’s Messenger and, for the sake of consistency, let’s say it’s the same bottle of Tropicana. You only ever see the presentation layer. You send the bot a message saying you want some Tropicana, and the backend picks it up.

2. Using Natural Language Processing (what happens when computers read language; NLP processes turn text into structured data), the machine converts this plain-text request into codified commands for itself.

3. Now the chatbot passes this data to a decision engine, since in the bot’s mind it has certain criteria to meet before it can exit the conversational loop, notably the quantity of Tropicana you want.

4. Using Natural Language Generation (what happens when computers write language; NLG processes turn structured data into text), the bot asks you how much of said Tropicana you want, much like you did with your mother.

5. This response goes back to the messaging backend and is presented to you in the form of a question. You tell the bot you want 1 litre, and we go back through NLP into the decision engine.

6. The bot now analyzes pre-fed data about the product, stores, their locations and their proximity to your location. It identifies the closest store that has this product in stock and tells you what it costs.

7. It then directs you to a payment portal and, after it receives confirmation from the payment gateway, places your order for you. Voilà: in one to two business days, you have 1 litre of Tropicana 100% Orange Juice.
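The steps above can be sketched as a small slot-filling loop. This is only an illustration under stated assumptions, not how any particular chatbot platform works. The `OrderBot` class, the `parse` and `closest_store` helpers, and the store names, distances and prices are all hypothetical, and the payment step is left out entirely.

```python
import re

# Criteria the bot must meet before it can exit the conversational loop.
REQUIRED_SLOTS = ("product", "quantity_ml")

# Hypothetical pre-fed data: stock maps (product, size in ml) to a price in dollars.
STORES = [
    {"name": "Store A", "distance_km": 4.0, "stock": {("tropicana", 1000): 3.0}},
    {"name": "Store B", "distance_km": 1.5, "stock": {("tropicana", 1000): 2.5}},
    {"name": "Store C", "distance_km": 0.5, "stock": {("tropicana", 500): 1.5}},
]


def parse(text):
    """Stand-in for the NLP step: extract whatever slots the message mentions."""
    slots = {}
    lowered = text.lower()
    if "tropicana" in lowered:
        slots["product"] = "tropicana"
    match = re.search(r"(\d+)\s*(litres?|ml)\b", lowered)
    if match:
        amount = int(match.group(1))
        is_litres = match.group(2).startswith("litre")
        slots["quantity_ml"] = amount * 1000 if is_litres else amount
    return slots


def closest_store(product, quantity_ml):
    """Return the nearest store with the item in stock, or None."""
    key = (product, quantity_ml)
    in_stock = [store for store in STORES if key in store["stock"]]
    if not in_stock:
        return None
    return min(in_stock, key=lambda store: store["distance_km"])


class OrderBot:
    def __init__(self):
        self.slots = {}  # what the bot has learned so far in this conversation

    def reply(self, message):
        self.slots.update(parse(message))  # step 2: text to structured data
        # Step 3: the decision engine checks which criteria are still unmet.
        missing = [name for name in REQUIRED_SLOTS if name not in self.slots]
        if "product" in missing:
            return "What would you like to order?"
        if "quantity_ml" in missing:
            # Step 4: generate a question for the missing detail.
            return f"How much {self.slots['product'].title()} would you like?"
        # Step 6: look up the closest store that has the product in stock.
        key = (self.slots["product"], self.slots["quantity_ml"])
        store = closest_store(*key)
        if store is None:
            return "Sorry, no nearby store has that in stock."
        price = store["stock"][key]
        return f"{store['name']} has it for ${price:.2f}. Shall I take you to payment?"


bot = OrderBot()
print(bot.reply("I want some Tropicana"))  # How much Tropicana would you like?
print(bot.reply("1 litre"))  # Store B has it for $2.50. Shall I take you to payment?
```

Keeping the collected slots on the bot object is what lets the second message, "1 litre", make sense on its own: the decision engine already knows the product and only needs the quantity. Store C is the nearest store but is skipped because it only stocks the 500 ml size.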

The biggest difference between chatbots and humans at this point in time, though, is what the industry calls empathy understanding.

Chatbots simply aren’t as adept as humans at understanding conversational undertones.

For example, there’s a very large difference between the statements

“We need to talk baby!” and

“babe, I think we need to talk….”

This difference, while immediately apparent to a human being, is difficult for a machine to comprehend. Progress is being made in this field, though, and soon machines will be able to understand not only what you’re saying, but also how you’re saying it and what you’re feeling while you’re saying it.

-------------------------------------------------------------------------------------------------------------------------------------