Story of Anima Anandkumar, the machine learning guru powering Amazon AI

Anima Anandkumar pioneered research on finding global optima in non-convex problems, a major pain point in machine learning. Our protagonist for this week’s Techie Tuesdays, Anima is an academician who represents the best of both worlds: industry and academia. She has contributed significantly to major AI and ML projects at Amazon.

This is a treat for all machine learning enthusiasts. In my two-hour conversation with Anima Anandkumar, Principal Scientist at Amazon Web Services, I was injected with the most potent dose of technical knowledge. Not that I expected anything less from a former UC Irvine faculty member (soon to be an endowed professor at Caltech), known for her research on non-convex problems in deep learning.

Our Techie Tuesdays protagonist of the week, Anima has worked towards establishing a strong collaboration between academia and industry. She follows an unconventional style of teaching, the one she would have loved as a student. Her love for mathematics, and specifically for tensor algebra, has helped her solve some of the toughest problems in machine learning.

Here’s the story of a machine learning guru who could have also been a Bharatnatyam dancer.

The family of engineers

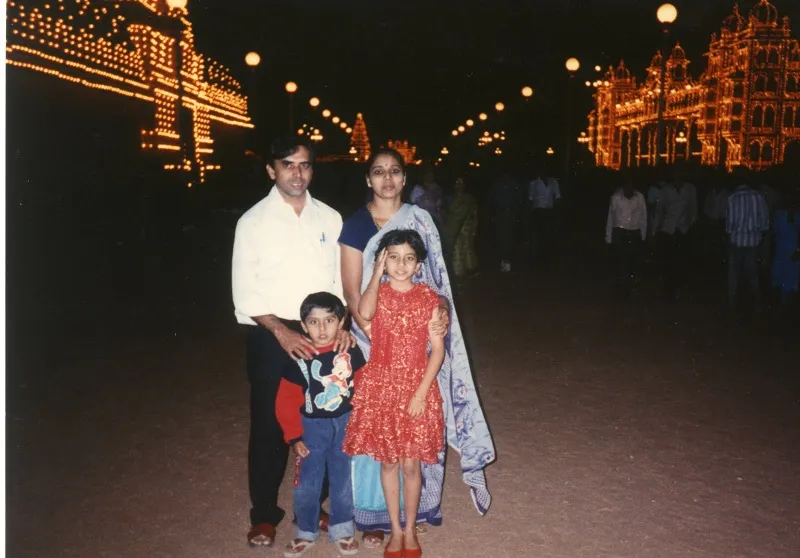

Anima was born in Mysore to engineer parents. Her father is a mechanical engineer (IIT Madras) and her mother is an electronics engineer. Her maternal grandfather was a math teacher. Anima naturally had a liking for science and math. She says,

I felt math was a natural language to converse with and to understand the world around.

Anima went to Marimallappa High School in Mysore where the school principal, MN Krishnaswamy, a math teacher, taught the students the philosophy of life as well. Anima learnt why we solve certain problems rather than blindly memorising them. As a kid, she was always amazed at how science would get us to the moon and beyond. She says, “To me it's magical but at the same time, something that we could understand and build up on more.”

Anima’s father is an entrepreneur, and he used to take her to industrial sites to show her how the machines worked. She remembers going to many industry expos as a kid. She also picked up dancing at the early age of three. She recalls,

“My mother took me to the dance class just to watch but I started picking up from there. I did Bharatnatyam for many years and took exams in it. In school, I was dancing and choreographing. I always thought that if I don't end up in tech, I would have become a dancer.”

Anima ranked fourth in the state in her class 10 examination. She had heard about IIT-JEE from her father. For Anima, getting into the IITs was the pinnacle of being good at Math and Science. Since there weren’t any coaching institutes for IIT-JEE in Mysore then, she took some distance coaching and referred to college-level books (like the Feynman Lectures on Physics). She says, “I treasure those times because I could get a concrete understanding of how to take this exam as a challenge. A lot of self-thinking and introspection helped me do that.”

Life at IIT Madras

Anima joined IIT Madras and opted for Electrical Engineering. She was a part of the organising team for the cultural and technical festival at the college. But the highlight of her college time was co-founding the Robotics Club at IIT Madras. In her final year at college, she and a fellow batchmate pitched the idea of a Robotics Club to the Director. The club was meant not only to help students unwind and relax but also to give them more hands-on tech knowledge.

Anima believes that students at the IITs have an entrepreneurial spirit. She recalls an incident when they came across a villager who had built a very practical device to de-husk coconuts with minimal effort. She and her friends helped him commercialise it.

First signs of research

After her third year, Anima spent the summer at IISc under Professor Y B Venkatesh, where she was introduced to Gabor filters. This helped in her research on efficient multi-resolution image processing. That's also when she decided to pursue a PhD. She applied to universities across the US and got a call from Professor Lang Tong of Cornell University (who eventually became her advisor).

Anima’s B.Tech. project was based on the idea of using the fractional Fourier transform for efficient image processing. It was supervised by Professor Prabhu in the Electrical Engineering department. That was when she started thinking about statistical signal processing and machine learning.

Distributed Sensor Networks a.k.a. Internet of Things

Anima was a little scared in the beginning. She says,

Until now, you had to solve a problem where you were given a question. But now, you had to come up with a question as well.

In the very first week, her advisor Lang Tong gave her a book on probabilistic methods by Noga Alon and asked her to read and present it, which she did on three subsequent Fridays. Anima thinks that a lot of researchers don't spend enough time brainstorming about what it is that we need to solve.

After her first year, she was looking at the area of distributed sensor networks which is now known as 'Internet of things'. She worked on solving questions like—how do we interconnect lots of devices when they have limited battery and communication capabilities but they all have a common goal. She says,

“With network sensors, we aimed at coming up with algorithms that can do with light weight communication mechanisms and be able to transmit information.”

The key was to bring in the statistical model. That was one of her first works where she showed that the bandwidth requirement (for transmitting data from sensors) can be drastically reduced by doing a statistical inference rather than sending in raw data.

She then wrote a longer and more expansive journal article about this work and called it type-based communication. She says,

The idea is that the sensors should be sending in counts of how many entries did they see of each type rather than the actual entries. This gives much better, more efficient bandwidth saving because there's no need to decode the raw data from each of the sensors.
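The counting idea in the quote can be sketched in a few lines. This is only an illustration under stated assumptions: readings are quantised into a small alphabet, the two candidate distributions (`normal`, `alarm`) are made up, and all function names are hypothetical rather than taken from Anima's papers.

```python
from collections import Counter
import math

def local_type(readings, alphabet):
    """Counts of each symbol one sensor observed (its 'type', i.e. histogram)."""
    counts = Counter(readings)
    return [counts.get(symbol, 0) for symbol in alphabet]

def merge_types(types):
    """The fusion centre only needs to add the per-sensor count vectors."""
    return [sum(column) for column in zip(*types)]

def log_likelihood(counts, probs):
    """For i.i.d. readings, the log-likelihood depends on the data only through the counts."""
    return sum(n * math.log(p) for n, p in zip(counts, probs))

alphabet = ["low", "mid", "high"]
sensor_readings = [
    ["low", "low", "mid", "high"],
    ["mid", "mid", "low", "low"],
    ["low", "high", "low", "mid"],
]
# Assumed candidate models of the environment (illustrative numbers only).
hypotheses = {
    "normal": [0.6, 0.3, 0.1],
    "alarm": [0.2, 0.3, 0.5],
}

total = merge_types([local_type(r, alphabet) for r in sensor_readings])
best = max(hypotheses, key=lambda name: log_likelihood(total, hypotheses[name]))
print(total, best)
```

Each sensor sends one short count vector whose length is the alphabet size, however many readings it took, and the inference at the fusion centre is unchanged because the likelihood never needed the order of the raw readings.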

Work on the opportunistic spectrum access

Anima later looked into the problem of opportunistic spectrum access, where she tried to answer two questions: can the spectrum licensed to primary players be used more efficiently? Can secondary players get opportunities to transmit when the primary users are not utilising the bands? This is called cognitive radio access. Anima formulated the problem as one of exploring which bands are (statistically) likely to be free and exploiting them.

This never took off but the idea was to convince the agencies to allow secondary users also to transmit in these license bands as long as they can prove that they are not going to interfere with primary users.
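The explore-and-exploit formulation of band selection is a multi-armed bandit problem. Below is a minimal sketch using the textbook UCB1 rule for a single secondary user; it is not necessarily the algorithm Anima used, the `sense` callback and the free-band probabilities are assumptions, and it ignores coordination among multiple secondary users.

```python
import math
import random

def ucb1_band_selection(sense, num_bands, rounds):
    """Pick one band per round; return how often each band was chosen.

    sense(band) -> True if the band was found free (hypothetical sensing callback).
    Assumes rounds >= num_bands.
    """
    picks = [0] * num_bands
    free_seen = [0] * num_bands
    for t in range(1, rounds + 1):
        if t <= num_bands:
            band = t - 1  # try every band once before comparing them
        else:
            # Empirical free rate plus an exploration bonus that shrinks with use.
            band = max(
                range(num_bands),
                key=lambda b: free_seen[b] / picks[b]
                + math.sqrt(2 * math.log(t) / picks[b]),
            )
        picks[band] += 1
        if sense(band):
            free_seen[band] += 1
    return picks

# Simulated environment: assumed probability that each band is free.
rng = random.Random(0)
free_prob = [0.2, 0.5, 0.8]
picks = ucb1_band_selection(lambda b: rng.random() < free_prob[b], 3, 1000)
print(picks)
```

The bonus term keeps rarely tried bands in play, so the user keeps exploring a little while spending most rounds on the band that has been free most often.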

The IBM stint

Anima worked in the networking group at IBM. When lots of transactions go through a legacy system, we lose track of what each part is doing, what configurations it has, and what it is meant to be doing. Anima worked on solving this problem of tracking end-to-end service-level transactions. Legacy systems don't have built-in instrumentation support. Anima designed frameworks that model these transactions as they pass through a state transition model. The frameworks also join timestamp footprints left in different places to track transactions end to end. Her team filed a patent on how to selectively retrofit legacy systems.

PhD to machine learning

Towards the very end of her PhD, Anima found herself at the crossroads of industry and academia. She still enjoyed Math and wanted something that was grounded in theory but that she could take all the way to practice herself.

In the final year of her PhD, she got in touch with Alan Willsky, Professor at the Massachusetts Institute of Technology (MIT). She was introduced to the idea of probabilistic graphical models, which is about modeling dependencies between different variables in the data, i.e. how the data collected at one sensor is related to the data collected at another. These correlations can be captured efficiently through graphs (dependency graphs). That's when Anima switched to machine learning, because she wanted to deal with the core problem of modeling lots of data efficiently.

She wanted to design learning algorithms that can process at scale and make efficient inferences about the underlying hidden information. She recalls,

That was the start when I felt like I could also program these algorithms, try it out on real data and be able to see the tangible results which showed how beautifully the theory worked.

She found a whole range of applications where she could apply this: computer vision, time series analysis (like the stock market), and time-varying data. She interviewed with many companies while finishing her PhD and even received some offers. But she accepted the offer to join the faculty at UC Irvine because it allowed her to do both research and work with industry. She wanted to advise students and teach in her own way to encourage more students to take up the sciences.

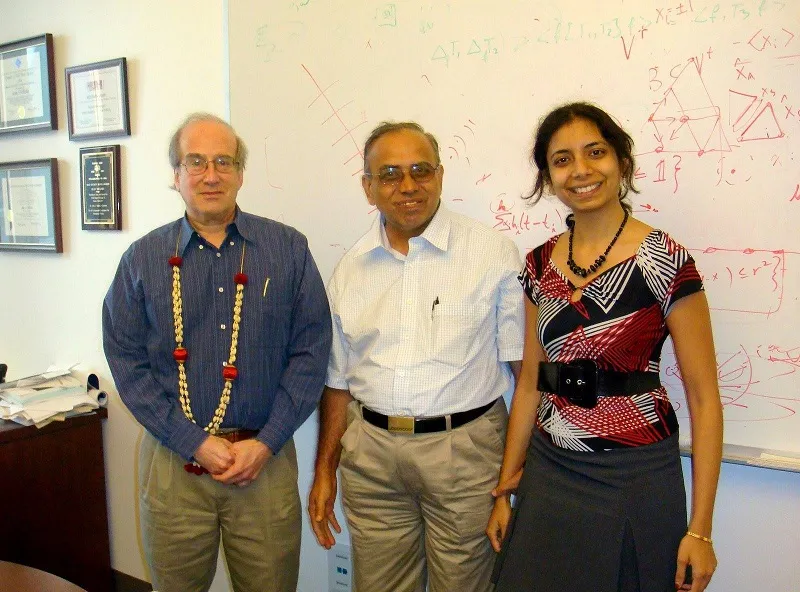

Research at UC Irvine

When Anima joined the faculty at UC Irvine, the big data revolution was just beginning. People were trying to figure out which algorithms would allow us to process data at scale and also make useful inferences. She started working on the problem of latent variables. These are variables that stay hidden from us no matter how many observations we make. She says, “There are some hidden aspects that we may never be able to identify because we just don't have enough information. But the ones which are limited by the computing capabilities can be found.”

She worked on hierarchical categorisation, which involves identifying the hidden tree that relates the different variables. For example, when classifying images of a house, the categories can be indoor and outdoor, then bedroom and drawing room, then bed, sofa and so on. The idea is to have the algorithms detect these classifications from data. Anima came up with fast, efficient algorithms that do this well in practice, and established in theory that the method is guaranteed to give the right answer under a certain set of conditions.

Of tensors and tensor algebra

Tensors and tensor algebra give rise to a lot of interesting mathematical properties. With tensors, the notion of multi-linearity gives rise to much richer operations. Applications include uncovering hidden phenomena such as communities in social networks, i.e. figuring out the common interests between two people who're friends on a social network and then using them to suggest friends. These algorithms make it possible to process large graphs with millions of nodes within an hour.

Anima says,

Kruskal who gave the famous algorithm on minimum spanning tree which is one of the classical algorithms in computer science, also gave elegant results on what can you identify using tensors (called the uniqueness theorem). Since then, there's a treasure trove of problems. This has deep connection with deep learning on how we can resolve non-convex problems at scale.
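To make the point about what tensors let you identify a little more concrete, here is a minimal sketch of tensor power iteration on a small symmetric third-order tensor. It assumes the hidden components are orthonormal, which is a strong simplification (practical pipelines typically add a whitening step first); the weights, vectors, and function names are illustrative.

```python
import math

def rank_one_sum(weights, vectors):
    """Build T[i][j][k] = sum_r w_r * v_r[i] * v_r[j] * v_r[k]."""
    d = len(vectors[0])
    return [[[sum(w * v[i] * v[j] * v[k] for w, v in zip(weights, vectors))
              for k in range(d)] for j in range(d)] for i in range(d)]

def contract(T, u):
    """Multilinear map T(I, u, u): contract the last two modes with u."""
    d = len(u)
    return [sum(T[i][j][k] * u[j] * u[k] for j in range(d) for k in range(d))
            for i in range(d)]

def power_iteration(T, u, steps=50):
    """Repeat u <- T(I, u, u) / ||T(I, u, u)||."""
    for _ in range(steps):
        w = contract(T, u)
        norm = math.sqrt(sum(x * x for x in w))
        u = [x / norm for x in w]
    return u

# Hidden orthonormal components and their weights (made-up values).
s = 1 / math.sqrt(2)
components = [[s, s, 0.0], [s, -s, 0.0], [0.0, 0.0, 1.0]]
weights = [3.0, 2.0, 1.0]

T = rank_one_sum(weights, components)
u = power_iteration(T, [1.0, 0.2, 0.1])
print(u)
```

From this starting point the iteration settles on the first component. The objective behind it is non-convex, yet the orthogonal structure of the tensor is what makes such a simple procedure work.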

Solving the non-convex problem

Anima wanted to push the bar and see if tensors can solve the problem of reinforcement learning. She wanted the algorithms to be adaptive. She says, “Notion of intelligence comes from being able to adapt to changes in the environment. Most learning algorithms are passive, i.e. you already have a bunch of data which you're processing and no change happens as the algorithm is learning.”

In the case of reinforcement learning, you try to learn what the environment is, but even as you're learning you're potentially changing that environment. For example, when (Google's) AlphaGo won against the best Go player, it had to adapt based on the player’s moves, but the player would change his/her moves based on AlphaGo's reactions every time.

If you break down this problem, you’ll realise that there's an objective, which in this case is to win the game. So how do you learn just enough to win the game? One of the classically hard problems in reinforcement learning is the partially observable Markov decision process (POMDP) setting, in which you don't observe everything in the environment; the unobserved parts are hidden variables. In the real world, a robot almost always sees only its immediate surroundings and not what’s happening in the entire environment. So, the aim was to learn about these hidden variables and how they impact the actions you take.

Using tensor algebraic methods, you can come up with efficient and fast ways to learn about hidden variables in the POMDP models. You can also look at relationships at different time points (what happened now vs some time ago and so on) and use that to infer about the behaviour of the hidden variables of the environment. It helps in balancing between the two aspects—to explore the environment and to maximise your objective.
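For readers new to POMDPs, the sketch below shows the standard Bayesian belief update over hidden states, given an action and an observation. It is the textbook filter for a known model, not the tensor-based learning method described above, and the two-state robot model with its probabilities is entirely made up.

```python
def belief_update(belief, action, observation, transition, emission):
    """New belief b'(s2), proportional to O[s2][obs] * sum_s T[action][s][s2] * b(s)."""
    n = len(belief)
    # Predict: push the current belief through the transition model.
    predicted = [
        sum(transition[action][s][s2] * belief[s] for s in range(n))
        for s2 in range(n)
    ]
    # Correct: weight each hidden state by how well it explains the observation.
    unnormalised = [emission[s2][observation] * predicted[s2] for s2 in range(n)]
    total = sum(unnormalised)
    return [x / total for x in unnormalised]

# Hidden states: 0 = corridor clear, 1 = corridor blocked (assumed toy model).
transition = [  # transition[action][state][next_state]; single action 0 = "wait"
    [[0.9, 0.1],
     [0.2, 0.8]],
]
emission = [  # emission[state][observation]; 0 = "sees nothing", 1 = "sees obstacle"
    [0.8, 0.2],
    [0.3, 0.7],
]

belief = belief_update([0.5, 0.5], 0, 1, transition, emission)
print(belief)
```

The robot never sees the hidden state directly; it only shifts probability towards the states that best explain what its sensors report.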

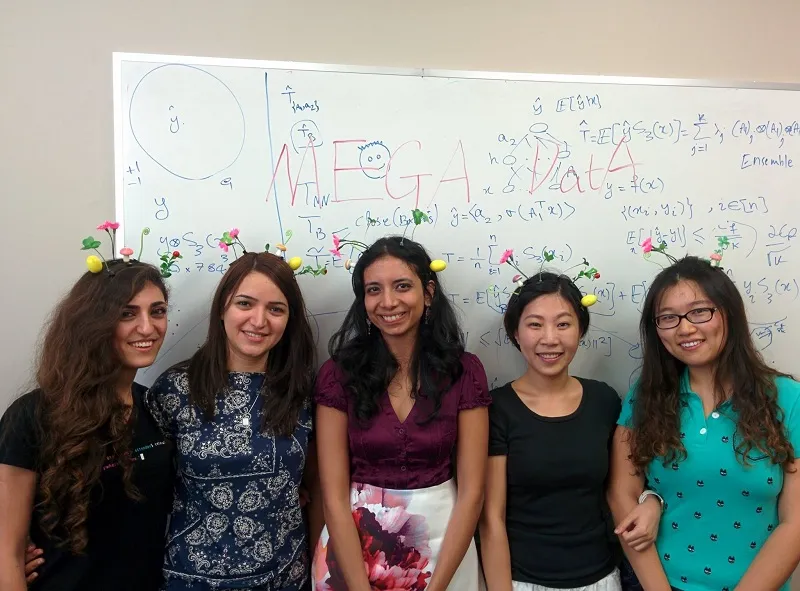

The professor I wanted to be

Anima makes sure that all her students feel like there is something to get out of her teaching. She finds it easier to have smaller classes which are a lot more interactive. She believes that learning really happens in a nonlinear fashion. She says, “When you try understanding one topic, there are so many other related topics. The danger with a sequential classroom type system is that it cannot easily make those connections.”

She followed an inverted classroom model where there were no lectures and participation of students was most important. She says, “It is important to make it interesting but have enough foundation. You can point out to the resources like YouTube videos and give assignments that are more applications oriented.”

Amazon and AI

Anima had earlier worked with Google, Microsoft, and Adobe (and won faculty awards as well) before joining Amazon. She is a big proponent of open source and all her work at Microsoft Research is open source.

In 2016, she got tenure at UC Irvine, and the next logical step for her was to go to industry and build robust software that could run on a range of different systems. She felt she needed a very strong engineering framework, and an industry setting, for that. As she was exploring different companies, Alex Smola, one of Anima’s collaborators and a professor at CMU, told her about his plan to move to Amazon and set up a group there. Given that they had worked very closely together and their research philosophies aligned well, Anima decided to join Amazon AI as Principal Scientist in October 2016.

She has launched a range of AI services and AI tools since joining. The mandate at Amazon is to provide large-scale algorithms on the cloud infrastructure. She says,

The AI services are managed services that enterprises can employ without running machine learning themselves. Then there are AI engines, like the open-source Apache MXNet engine we are developing, that data scientists will use. They have very efficient scaling. As these models are getting much bigger and there is need for more and more computation, we need efficient software frameworks.

She has been adding a lot of tensor capabilities to this deep learning framework and also enabling topic models.

The AI tools

Anima shared a list of AI tools which she’s working on and which are offered by Amazon currently:

- Amazon Rekognition – It’s a deep learning based image analysis service which is helpful in image recognition, scene recognition, and face recognition. It lets enterprises get annotations in an automated manner in real time.

- Amazon Lex – It’s an interface that chatbot developers can utilise to quickly design chatbots for a variety of different applications. Lex lets customers harness the knowledge graph of Alexa.

- Amazon Polly – It is a text-to-speech service that processes large amounts of text in real time. The deep learning engine MXNet scales very efficiently, running on hundreds of GPUs at about 90 percent efficiency.

Amazon is building out capabilities for running sophisticated algorithms at scale using MXNet.

More tensor algebra and machine learning

Tensor algebra helped Anima think about what makes learning hard. It turns out that a lot of non-convex problems appear very hard at first glance, but as we discover more structure in them, there turn out to be very simple ways to solve them. She believes that a lot of machine learning is headed towards exploiting such structure to reduce the difficulty of solving non-convex problems. She says, “In fact people have been trying out these different network architectures. They are trying ways to overcome hard non-convex problems. But I would like to think fundamentally—where is the hardness coming from and how do we resolve it. That would help us speed up deep learning and be able to solve even harder problems with more scalable models.”

Decision making and values

Anima tries not to jump to conclusions. She says,

As humans, we have the tendencies to go by the gut and make those immediate decisions. I try to think about asking for additional information. Specifically, if they are important decisions with longer-term implications.

Her decision making also depends a lot on the repercussions of those decisions.

She takes enough risks when it comes to research. She says, “Even if the idea fails I learn something new and I gain some good perspective on it.”

So, it depends on the context in which the decisions are made.

Anima believes in acquiring knowledge and being able to have the freedom of thinking while trying to make unbiased decisions and inferences. She wants to be empathetic to others. She adds, “Once I became faculty, the responsibility of guiding students felt enormous. I want to make sure that I guide them to not just finish the project but develop independent thinking.”

She still keeps up with dancing in different forms. That's a big part of expression to her. She loves running, swimming, hiking, and surfing as well.

Advice to AI & ML startups

The only advice Anima would give to somebody with a startup is to have a clear vision of the product and some advantages in data. She adds, "There would be some private data that would be hard for others to get easy access to and that creates a barrier. It’ll give a competitive advantage. Otherwise, this is really a level playing field. All the tools are open source and it's very easy and quick to get machine learning system started. Amazon and other companies are even offering them on the cloud."

Women in tech—views on the skewed numbers and proposed reservation

When Anima went to IIT Madras, she realised for the first time how few women there were around her (the female-to-male ratio at IIT Madras was 1:20 then). From there on, wherever she went, the number kept shrinking. But she thinks that reservation for women in the IITs would be a gross mistake. She explains,

Even though I missed having more women in IIT, the women who got in there were remarkable since they overcame other barriers and still performed well; it gave a lot of confidence. Though I do wish there were more women and I'm always looking how to improve the diversity, it should be towards helping women overcome barriers (without compromising on performance/quality).

She even created a petition on change.org against the reservation for women in IIT entrance examination calling it regressive and counterproductive. She says, “This would be very unfair to the women. They'll feel that they are coming in with a special category and that will lead to even more discrimination. I've been through this system where I was the only woman but was treated equal. Other thing is to ask if the entrance examination is the right model or should you use a more comprehensive way to admit people and if diversity is one of these.”

She feels that the challenge is much more cultural in the US where many women think that Math and Science are not for them. Though much has been done to overcome that, there's still a lot of room for improvement. She adds, “Computer Science in Caltech has 43 percent women graduates whereas the national average is less than six percent.”

Living the childhood dream

Anima is the youngest endowed professor at Caltech (joining in the summer of 2017). Sharing her time between Amazon and Caltech, her goal is to think about truly collaborative work between industry and academia.

At Caltech, the connection with JPL (NASA's Jet Propulsion Laboratory) and her previous work, which is useful there, could let her live her childhood dream of working with NASA as well.

You can connect with Anima on Twitter and LinkedIn.